Usability testing is a great way to improve a website, but it’s often thought of as an expensive money sink that requires a lab. While tests can sometimes be that, it tends to be the edge case.

A usability test can be an efficient, affordable way to improve a section of the website.

To ensure that you run those kinds of tests, you need to understand when and how to perform the right kinds of tests.

Website Usability Testing Basics

Usability tests are important because what people say and what people do can be very different things.

The tests are needed because watching how users interact with the website can help you fix most of the critical issues on the site in a way that split tests and multivariate tests can’t.

It doesn’t take a lot of users to learn about your site, and eye-tracking and usability labs are not always required. You can get a lot of value from just watching 12-15 users walk through an interface, given the right scenarios and goals.

Before you can plan for the right goals, you need to understand what goals are a fit for usability tests.

Difference from Split Testing

Split testing is a powerful tool, but it has its limitations. When compared with usability tests, it’s volume-oriented rather than taskflow change-oriented.

Split tests are geared towards page improvements rather than improvements to entire sections of the site (e.g. the entire checkout flow). They are also designed for statistical significance rather than specific user insights:

| | Usability Test | Split Test |
| --- | --- | --- |
| Users | 15 | Thousands |
| Statistical Significance | Cannot be established | Must be established |
| Reason | User insights | Scientific proof |

Statistical significance is great for improving elements on a page. However, watching users try to do something on a given interface can give you specific, actionable ways you can change an entire workflow – a checkout sequence, a way to look up contacts, a way to get to pricing.

Split tests can’t get you that.

The insights you can get from usability tests are great for improving projects at various stages of the development cycle.

Project Stages for Usability Testing

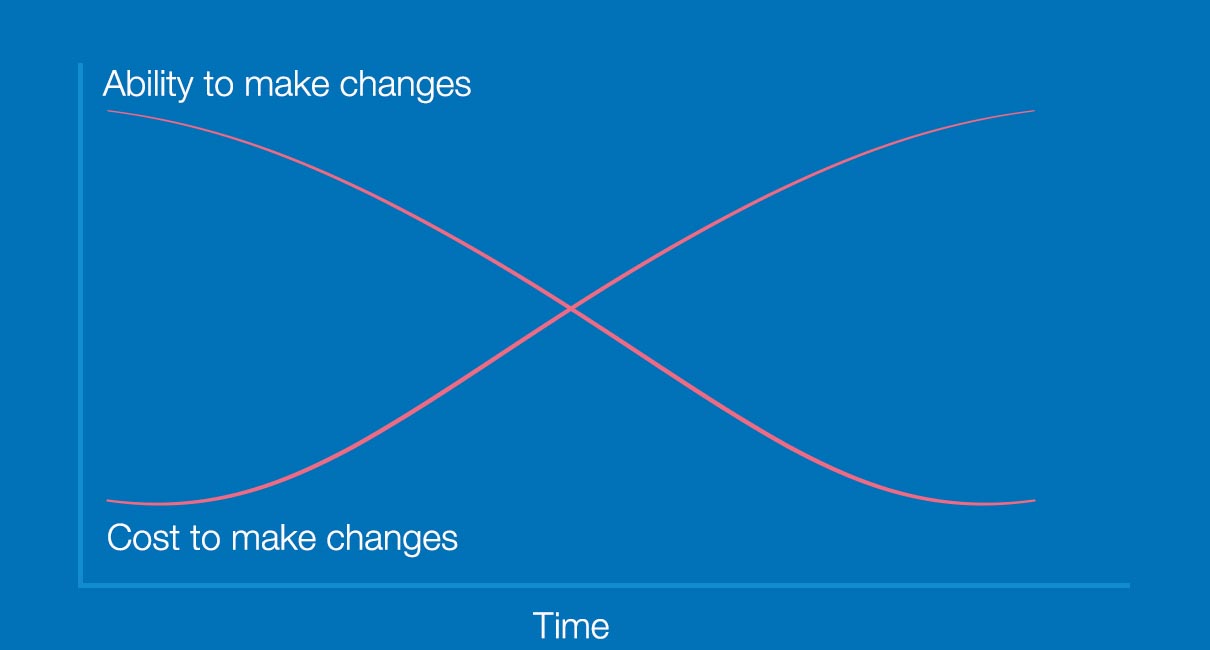

Your ability to make changes to an interface diminishes over time. Meanwhile, the cost to make changes increases over time.

Another difference between split tests and usability tests is that usability tests can be conducted at almost any stage of the project, rather than only in a production environment. You don’t need to have anything live to run usability tests.

As it gets more expensive to make changes the further along you are in the project, it’s usually a good idea to conduct the usability test early in the development phase. That said, running a test at any stage is better than not running any test at all:

Nothing built yet

You can run a usability test on a competitor’s site, on a comparable section. That will tell you what’s working and what’s not working for a similar interface, and you can avoid the pitfalls that competitors have.

Early iterations

You can use a paper prototype of your design to find critical issues before you start spending on development.

Later stage

Before you go to production, you can create a clickable prototype of the interface for testing and run tests that way. Clickable prototypes are cheaper to correct than live code, so catching issues here would be critical.

Final design

You should plan on running usability tests regularly for the weakest parts of the website, even on production.

Regardless of the stage at which you run usability tests, you’ll need some way to prioritize. There are a few tools you can use to make that easier.

Finding Sections for Testing

To get the most bang for your buck, you need to find the areas that will move the needle the most when improved:

High failure-rate tasks

If you have a survey tool, you should use questions like “what are you trying to find on the website” and “did you find it?” to identify the task success rate. You can look at the sections with low task success rates and high traffic for usability testing candidates.
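
One rough way to combine task success rate and traffic into a single ranking is to estimate how many visits per month end in failure for each section. The sketch below is only an illustration: the `Section` fields, the scoring function, and all of the figures are assumptions, not output from any particular survey or analytics tool.

```python
from dataclasses import dataclass


@dataclass
class Section:
    name: str
    monthly_visits: int        # traffic to the section
    task_success_rate: float   # share of surveyed visitors who found what they wanted (0.0 to 1.0)


def failed_visits(section: Section) -> float:
    """Estimated visits per month where the visitor did not find what they wanted."""
    return section.monthly_visits * (1.0 - section.task_success_rate)


def rank_candidates(sections: list[Section]) -> list[Section]:
    """Order sections so the largest estimated number of failed visits comes first."""
    return sorted(sections, key=failed_visits, reverse=True)


# Hypothetical figures, for illustration only.
sections = [
    Section("Checkout", 20_000, 0.55),
    Section("Pricing", 50_000, 0.80),
    Section("Help center", 5_000, 0.40),
]

for s in rank_candidates(sections):
    print(f"{s.name}: about {failed_visits(s):.0f} failed visits per month")
```

In this made-up data, the pricing section ranks first even though its success rate is the highest, because its traffic is much larger. Treat the ranking as a starting point for discussion rather than a verdict.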

Critical paths to conversions

Paths that are particularly important for conversions make for good areas to test.

Types of Usability Tests

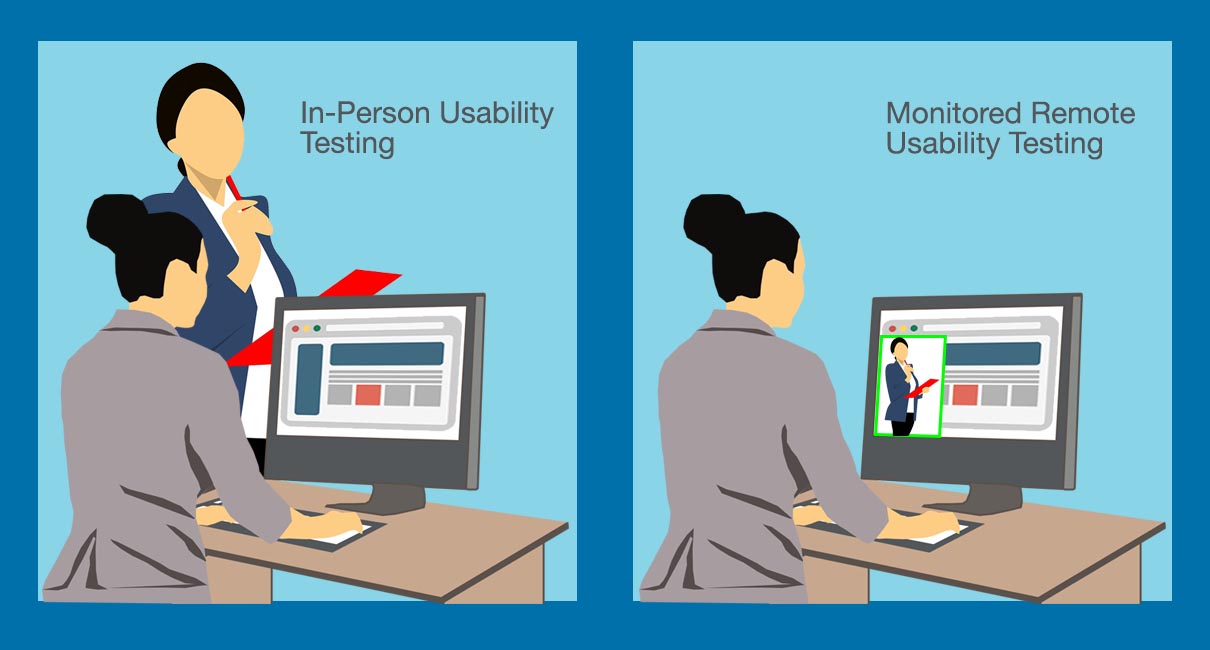

You can run usability tests either in person or remotely through meeting software such as Skype or GoToMeeting, as long as you can record both the screen actions and the call audio.

On-location testing tends to be more expensive but might allow you to observe more things.

Remote testing will allow you to run tests on a budget. However, it will not allow you to look at things like the facial expressions and body language of website users as they talk.

On-Location Usability Tests

You can hold usability tests in your company’s office, the web visitor’s environment, or in a professional usability lab. Each one has advantages and drawbacks:

Company office

You won’t get the eye-tracking specific observations, or see the web visitor’s actual environment when they browse to see what distractions are there. But a casual usability test in a conference room allows you to involve more people from different teams, show prototypes, and have a less formal setup that can get visitors to talk aloud.

Web visitor’s office/home

The user’s own environment can show you potential distractions they may have while navigating. It can also show you the network speed a typical potential customer might have. However, it can be cost-prohibitive to bring along multiple team members, and you will not be able to show proprietary prototypes.

Usability lab

Sessions held in usability laboratories can be cost-prohibitive and more formal than other environments, so it might be tougher to get users to talk aloud. However, you might be able to glean more information from gaze patterns using eye-tracking technologies.

On-location usability tests tend to be more expensive, but they are usually worth it, given the extra information you’ll get about your interface.

Remote Usability Tests

Remote usability tests can be run on a budget, but they tend to NOT be as data rich as their on-location cousins. For most interfaces, though, remote usability tests should be more than enough to find the most critical issues.

There are two ways to run remote usability tests:

Unmoderated

This is the cheapest way to run usability tests. You might not get your exact target users, and you might not be able to glean as much information if you’re not conducting an interview. However, it’s a fast, inexpensive way to get feedback about tasks on your site.

- An interface of your choosing gets sent to a panel of pre-selected testers. You then send instructions describing the tasks they should perform. Once they perform the tasks, you get stats on their success rate, along with their comments.

Moderated

Moderators are a great boon for usability testing. If you can …

1. set up a GoToMeeting, WebEx, or similar meeting with a user,

2. ask for permission to record the session, and

3. ask them questions as they perform tasks on your site

… you’ll get more information than you would with an unmoderated test.

Conducting Unmoderated Remote Tests

Because unmoderated remote tests are very different from the other types of tests, let’s tackle these first.

Here’s the least you need to know about unmoderated remote tests:

- Sessions are shorter, so you’ll need to keep them pretty focused.

- Since participants will be reading tasks and attempting to conduct them, you don’t get as much insight into why they are doing what they are doing.

- Facilitators cannot interact with the participants, so figures like time on task become even more important compared to the other kinds of usability tests.

- Participants can have short post-task comments. That’s where you can glean some information about what they were thinking as they interacted with your interface.

Recruiting Participants

If you’re running on-location or remote moderated tests, you’ll need to recruit a set of participants for the usability tests.

This part can be a deterrent for some companies as it sounds expensive, but it really shouldn’t be too much of an issue. At the low end, with 15 users at 30 dollars per user, you can get participants for a full usability testing cycle for less than 500 dollars.

For in-person tests or moderated remote tests, you can get participants from a few different sources:

- Customer lists. If you have existing customer lists, you can send them invitations to participate in usability tests.

- Intercepts. If you can create intercepts on your website using tools like Ethnio or Qualtrics, you can show a usability test invitation to a fraction of your users.

You’ll usually want to offer an incentive for users to participate in a usability test. Offer anywhere from $25 to $100 in gift certificates or something similar, depending on the complexity of the test.

When you have a full set of testers, you’ll usually want a few extra “floaters.” These are people who get a smaller incentive than active testers, but can replace active testers when people become unavailable.
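
The incentive math above is simple enough to sanity-check in a few lines. This is a minimal sketch: the function name is made up for illustration, and the floater count and floater incentive are assumptions, since the figures above only cover active testers.

```python
def incentive_budget(participants: int, incentive: int,
                     floaters: int = 0, floater_incentive: int = 0) -> int:
    """Total incentive cost in dollars for one full testing cycle."""
    return participants * incentive + floaters * floater_incentive


# Low-end case from the text: 15 users at 30 dollars each.
low_end = incentive_budget(participants=15, incentive=30)
print(f"Low end: ${low_end}")

# The same 15 users across the $25 to $100 incentive range.
print(f"Range: ${incentive_budget(15, 25)} to ${incentive_budget(15, 100)}")

# Assumption for illustration: 3 floaters at $15 each.
with_floaters = incentive_budget(15, 30, floaters=3, floater_incentive=15)
print(f"With floaters: ${with_floaters}")
```

The low-end case comes to 450 dollars, which matches the "less than 500 dollars" figure. More complex tests with higher incentives will cost more, so plug in your own numbers before committing to a budget.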

Grouping Usability Tests

To get the most out of participants, have only a small number testing a particular design. Four to five people should let you catch most of the issues of an interface.

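The idea is to test an interface, catch most of the issues, fix them, and then do that two more times before launching the production version. With four to five participants in each of the three rounds, you end up with the 12 to 15 users mentioned earlier.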

Preparing the Task

You’ll want two things for a user task:

- A section of your website, prototype, or interface to test

- A goal for the user to achieve in that section

If you run a travel site, for example, you can try to get the user to find the cheapest 3-star hotel that offers free internet access and free cancellation in Sydney for a particular date.

For that type of task, the specificity matters.

It may be easy to set the dates and find a hotel, but the filtering mechanism may be clunky for finding rooms with WiFi or free cancellation.

Depending on the type of site you have or the section you are testing, the need may be a tad more basic, especially if the part of the customer journey you’re testing tends to be early stage (e.g. “Find references in this section that talk about improving your credit score”).

Whatever combination of section and goal you end up with, you’ll need a consent form prepared, and a set of questions for your participants.

Preparing the Consent Form

The consent form can include verbiage around how you’ll use the data and how the data will not be shared with other companies, but the basic components are below:

- Participation in the test is voluntary.

- The screen and audio will be recorded.

- The participants can raise issues at any point.

- If they are uncomfortable, they can ask the researcher to stop the usability test.

Basic examples of consent forms can be found at usability.gov or from Steve Krug. You can reference those as the base and add the items you need.

Preparing Questions

The most important thing you can do while running a usability test is to get the participant to think aloud. That is, the participant should be talking about what they are experiencing while navigating your site or prototype.

To do that, you need to ask questions like the ones below:

- Where will you start looking for the information?

- Before you click on anything, can you tell me what you expect will happen?

- Did that meet your expectations?

The idea is to prod the participant to reveal how he or she is thinking about the interface without leading him or her down a particular path.

As you prepare questions for the sections that the test will run through, try to bring out how discoverable the elements are and whether or not what the participant needs is easy to find.

Make sure that you meet at least the criteria below when developing your questions:

- The writing is dry and specific, rather than cute

- No leading – avoid influencing what the user will do on a page

Running Usability Test Sessions

Before the actual usability test takes place, there are a few things you need to do:

- Ensure you can record both the audio and the screen.

- Have at least a moderator and an observer. This way, someone can take notes as the moderator asks questions.

- Welcome the participant, have the consent form signed, and give the participant the incentive. The idea is to disassociate the incentive from “doing well” on the tests.

After the consent form has been signed and while the screen to be used for the test is already up, explain to the participant that they are not getting tested. There are no right or wrong answers. The interface is getting tested, so the participant should be as candid as possible.

As you ask questions, you can echo the participant’s statements, but do not give them clues about how to find what they need while carrying out a task.

Put yourself in a position to easily assess these when the session ends:

- Time on task – how long it generally took to finish the task

- Task success rate – how many participants completed the task versus how many didn’t

- Reason for failure – the area or mechanism that participants failed to understand if they were not able to complete their task

After each task, you can also ask them to give you a subjective satisfaction score. You can use this score for debriefing sessions and directional trending as you improve the interface.
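
If the observer logs one record per participant per task, these figures can be tallied right after the last session. The sketch below shows one possible shape for that tally. The `TaskResult` fields, the 1 to 5 satisfaction scale, and the sample data are all assumptions made for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class TaskResult:
    participant: str
    seconds_on_task: float
    completed: bool
    failure_reason: Optional[str] = None  # observer's note, only for failed attempts
    satisfaction: Optional[int] = None    # subjective score; a 1 to 5 scale is assumed


def summarize(results: list[TaskResult]) -> dict:
    """Summarize one task across all participants."""
    if not results:
        raise ValueError("need at least one result")
    scores = [r.satisfaction for r in results if r.satisfaction is not None]
    return {
        "task_success_rate": sum(r.completed for r in results) / len(results),
        "median_seconds_on_task": median(r.seconds_on_task for r in results),
        "failure_reasons": Counter(
            r.failure_reason for r in results
            if not r.completed and r.failure_reason
        ),
        "mean_satisfaction": sum(scores) / len(scores) if scores else None,
    }


# Hypothetical notes from five sessions of a hotel search task.
results = [
    TaskResult("P1", 95, True, satisfaction=4),
    TaskResult("P2", 140, True, satisfaction=4),
    TaskResult("P3", 210, False, "did not notice the cancellation filter", 2),
    TaskResult("P4", 120, True, satisfaction=5),
    TaskResult("P5", 300, False, "did not notice the cancellation filter", 1),
]

summary = summarize(results)
print(summary["task_success_rate"])
print(summary["median_seconds_on_task"])
print(summary["failure_reasons"].most_common(1))
```

With only four or five participants per round, these numbers are directional at best. The recurring failure reasons are usually the most useful part to bring to the debrief.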

Once you’re done with all the tasks, thank the participant and start planning for the debriefing sessions.

Conducting the Debrief Sessions

The best way to make your usability tests count is to make them a spectator sport.

If you’re holding the usability testing session in your office, spend on snacks that will draw people to watch users go through – and sometimes fail to use – your interface.

There are very few things that can settle a debate faster than seeing a user flail around, not knowing what to do. It cuts through the competing internal needs to feature something, arguments about verbiage being good enough, and a range of other issues that can be tough to settle with web analytics and split testing alone.

- If you’re conducting your test on the live environment, try to come up with the biggest things that can be fixed with the least amount of effort during the debrief. That should ensure the items that need to be improved can be fixed over the course of days or weeks, rather than months (or worse, put on hold until you have a larger site redesign).

- If you’re conducting the test on a prototype, try to agree on what will be fixed for the next version of the prototype or before the go live during the debrief.

Usability Testing Basics

Most companies that are not running usability tests today should be running at least a few of them, for bigger feature releases and for issue prioritization a few times per year.

Organizations that are running these tests a few times per year should probably run more, and make the intervals consistent and structured. That way, a usability-centric culture can begin to take shape.

If you take the time to understand the advantages and disadvantages of usability tests and use the tests where appropriate, you’ll have another lever to tweak to get conversion gains.
