Summary: Asking your website visitors too many survey questions can do more harm than good. Be strategic and ask only a few – try to limit them to the 5 best website survey questions.

Web surveys can be double-edged swords. The tools required to conduct surveys properly have obvious drawbacks, starting with price: web survey tools don’t come cheap. Cost (and cost justification) is just one of your headaches, however. The others can be far more damaging.

Implemented incorrectly, web surveys can hurt the user experience. Even when they’re implemented correctly, the surveys themselves sometimes serve too many masters:

- brand and Net Promoter Score questions for chief marketing officers,

- visitor demographics for metrics teams, and

- usability questions for your user experience people

Of course, when all of them are in charge, you end up with a 150-question survey that only your most patient visitors will suffer through — and begrudgingly so.

There’s a better way to do this.

What you need to do is think about (and make the company think about) what the survey offers that Google Analytics, Adobe Analytics, WebTrends, or any other traffic monitoring tool will NOT tell you, namely:

- what visitors want,

- whether they can get to it, and

- what’s broken on your website (in their own words)

You need to drastically reduce the number of questions you ask. I suggest the following five as the best website survey questions because their answers give you specific problems to start testing solutions for. You can have more than these, but try to keep the total number of questions below 15, if you can, for a healthy response rate.

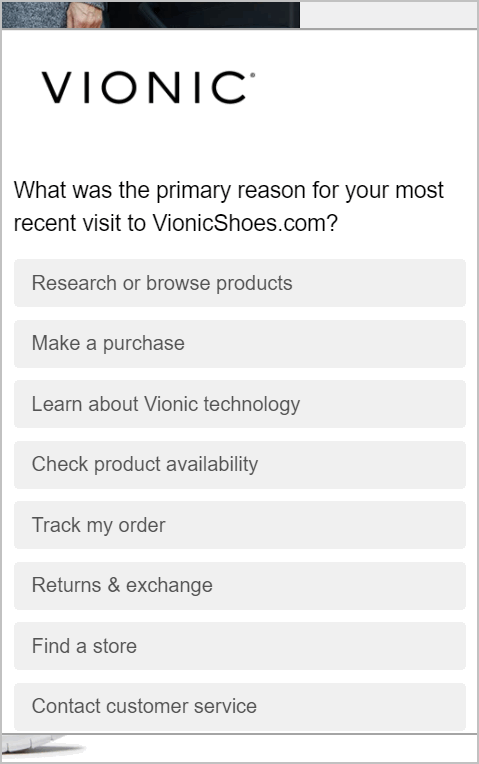

Question 1: What are you looking for on the website?

A good variant is, “Why did you come to the website?”

You think you know the user task, but unless your visitors tell you specifically what they want, and you process the data, you don’t. Traffic data doesn’t give you this information. What do visitors want when they get to your product detail page? Pricing? Specifications? Accessories? A/B tests don’t give you this information either.

About the only thing in a traffic analysis tool that comes close to revealing this level of intent is on-site search terms. But those cover only the segment of your traffic that self-selects into “askers” or experiences difficulty.

You need to know this, you need to segment this, and only survey tools will tell you this.

Question 2: How did you navigate the website?

To find what’s broken on your website, you need to know what type of experience people are having. There are some ways to tell how users are navigating without asking them specifically, but it helps to have this question handy when you’re diagnosing what’s broken.

You need to provide common navigation techniques and an “other” option to classify edge cases. More on how to segment this later.

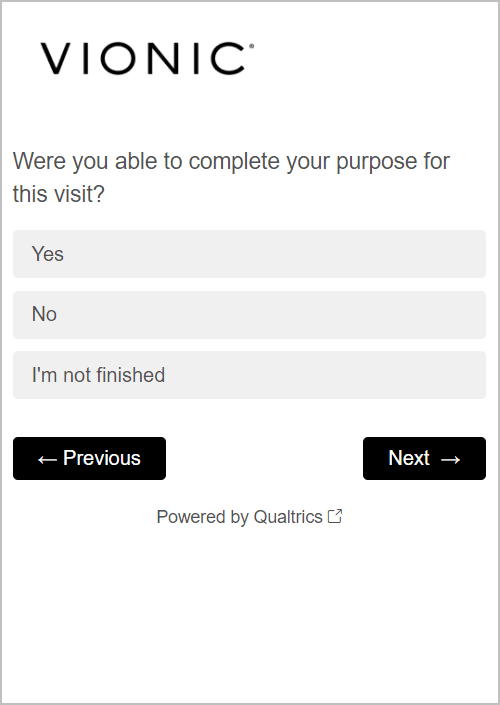

Question 3: Did you find what you were looking for?

This is arguably the most important thing there is to ask. This is the big daddy of usability questions: accomplishment rate.

If you combine this question with the user task from question one, you get task accomplishment rate. Combining the two questions shows you which tasks your visitors attempt (and fail at) most often. That’s the problem you need to focus on most. You can then test solutions for areas where you know you’ll move the needle.

Alternatively, you can combine this with question number two, the common navigation paths. This will get you broken navigation areas. You may be able to implement things sitewide to fix areas you found to be weak.
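To make both combinations concrete, here is a minimal sketch in Python. It assumes you can export survey responses as simple records; the field names (`task`, `navigation`, `found`) and the sample data are hypothetical and do not come from any particular survey tool.

```python
from collections import defaultdict

# Hypothetical survey export: one record per respondent.
# "task" is the answer to question 1, "navigation" to question 2,
# and "found" to question 3. All values here are made up for illustration.
responses = [
    {"task": "pricing", "navigation": "site search", "found": True},
    {"task": "pricing", "navigation": "top menu", "found": False},
    {"task": "pricing", "navigation": "top menu", "found": False},
    {"task": "specifications", "navigation": "top menu", "found": True},
    {"task": "accessories", "navigation": "site search", "found": False},
    {"task": "specifications", "navigation": "site search", "found": True},
]

def accomplishment_rate_by(responses, field):
    """Return {segment: (respondents, accomplishment rate)} for one survey field."""
    totals = defaultdict(int)
    successes = defaultdict(int)
    for r in responses:
        totals[r[field]] += 1
        if r["found"]:
            successes[r[field]] += 1
    return {seg: (totals[seg], successes[seg] / totals[seg]) for seg in totals}

# Question 3 crossed with question 1, then with question 2.
by_task = accomplishment_rate_by(responses, "task")
by_navigation = accomplishment_rate_by(responses, "navigation")

# List the most common tasks first so high-volume, low-success tasks stand out.
for task, (count, rate) in sorted(by_task.items(), key=lambda kv: -kv[1][0]):
    print(f"{task}: {count} respondents, {rate:.0%} found what they wanted")
```

Tasks or navigation methods with many respondents and a low rate are the natural first candidates for testing.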

Accomplishment rate data, taken by itself, is just a snapshot of your site’s usability. It can be a nice thing to present to your chief marketing officer, but by itself it won’t let you solve anything. Combined with other questions, though, it’s a very actionable metric.

Learn how to identify website user intent by setting up on-site search tracking.

Question 4: If no, why not?

Your visitors typically aren’t Steve Krug (if he is a visitor, go grab a beer and celebrate). They …

- won’t tell you how to best FIX problems

- aren’t user experience experts

- don’t devote large swaths of their brain to usability tests

You do. (You do, don’t you?)

If you listen closely to your visitors, they’ll tell you what’s wrong. If you capture their verbatim comments, those comments might provide insights into why something you worked so hard on is broken. They’ll tell you not just that your baby is ugly, but why they think that. Remember, those types of comments can be heartbreaking, but they are good things. They will tell you what to prioritize and fix.

Question 5: If you didn’t find what you were looking for, what did you do next?

If “did you find what you were looking for?” is about “accomplishment rate”, this question is about “opportunity cost”. If the people who don’t find what they’re looking for leave for other websites, call or email you, or take some other action, you can attribute costs to that. You can then justify budgets to management to fix the worst problems and get testing started on narrowly defined areas with very specific targets and smarter goals.
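As a rough sketch of how that cost attribution might work, the Python below tallies the “what did you do next?” answers and multiplies each by an assumed cost. The answer categories and dollar figures are placeholders invented for illustration; you would substitute your own survey options and numbers from your finance or support teams.

```python
from collections import Counter

# Hypothetical answers to question 5 from visitors who answered "no" to question 3.
next_actions = [
    "left for another site",
    "called support",
    "left for another site",
    "emailed support",
    "gave up",
]

# Assumed cost per occurrence, in dollars. These figures are placeholders,
# not benchmarks: use your own lost-sale and support-contact estimates.
assumed_cost_per_action = {
    "left for another site": 40.0,
    "called support": 8.0,
    "emailed support": 3.0,
    "gave up": 0.0,
}

def opportunity_cost(actions, cost_per_action):
    """Return {action: count * assumed cost}; unknown actions are costed at zero."""
    counts = Counter(actions)
    return {action: n * cost_per_action.get(action, 0.0) for action, n in counts.items()}

costs = opportunity_cost(next_actions, assumed_cost_per_action)
total_cost = sum(costs.values())

# Show the most expensive outcomes first.
for action, cost in sorted(costs.items(), key=lambda kv: -kv[1]):
    print(f"{action}: ${cost:.2f}")
print(f"Total across surveyed failures: ${total_cost:.2f}")
```

The total only covers survey respondents, so treat it as a lower bound to scale up by your response rate rather than a full-site figure.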

Going Beyond the 5 Best Website Survey Questions

Remember, these are the five prescribed questions – you can have a few more. But just a few.

It takes willpower and specificity to bring the number of questions down. You can have a question for satisfaction for the brand team, as long as you keep the number manageable. You can have a “new vs. returning visitor” for your traffic measurement team (bonus points if you just get a cookie to tell your survey tool this without bothering the visitor).

However, the five best website survey questions will tell you what to test, what to fix, and ultimately, let you focus on things that will move the needle.

If you have a survey tool and you’re not asking these questions, it’s time to look at what questions you can remove, so you can ask the things all online marketers need answers for. If you don’t have a survey tool, you may want to look at Google Consumer Surveys for surveys on a budget, or at iPerceptions, ForeSee, and OpinionLab for moderate to large survey budgets.

(Read “Web Survey Tools 101: From Pricing to Execution” for a list of survey tools you can consider.)

This article originally appeared in Tim Ash’s Total Retail column in December 2013. It has been updated to reflect marketing tools in 2020 and to include images of sample survey questions.