Summary: Large language model (LLM) AI – like ChatGPT – is all the rage right now, disrupting several industries, including online marketing. However, while AI-powered chatbots can generally help marketers work more efficiently, they have pitfalls users should be aware of. Digital marketers will do well to understand where this technology excels and where it’s likely to fail.

In this article, we’ll discuss the basics of LLM AI, particularly ChatGPT, and its strengths and weaknesses in the context of online marketing.

Introduction: The State of Large Language Model AI

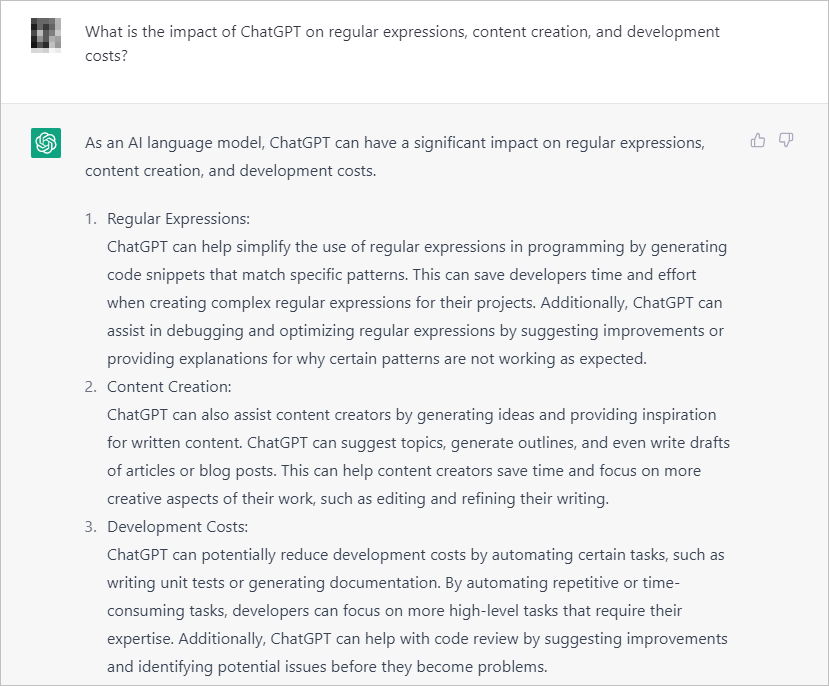

When asked what ChatGPT’s impact is on regular expressions, content creation, and development costs, the tool details ways it can help content creators and developers.

Even though it doesn’t feel like it, artificial intelligence (AI) in the form of large language models (LLMs) – the type of systems used by technologies like ChatGPT, Bing AI, and Google Bard – has been around for years. GPT-2, for instance, was released by OpenAI in February 2019.

What has really changed since December 2022 is that AI exploded into public consciousness:

- ChatGPT, the poster child of this AI revolution, reached an estimated 100 million users within about 2 months of launch, faster than any consumer application before it.

- Microsoft’s Bing, while still a smaller player in the search space, crossed 100 million daily active users with an assist from Bing AI chat.

- Traditional media sites that generally wouldn’t cover specific digital marketing news like the launch of GA4 – institutions like The New York Times, The Wall Street Journal, etc. – are all watching the developments with bated breath.

For executives, analysts, and marketers in general, it pays to understand the basics of what this technology is, how it functions, what the pitfalls are, and how you can use it to benefit your business.

Let’s dive in.

What Is a Large Language Model or LLM?

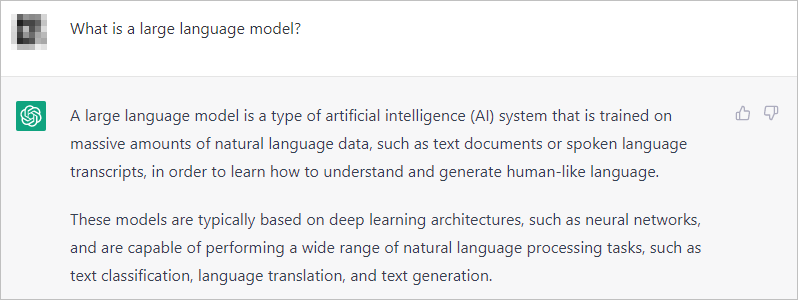

ChatGPT’s response when asked what a large language model is: “ … a type of AI system that is trained on massive amounts of natural language data, such as text documents or spoken language transcripts, in order to learn how to understand and generate human-like language. These models are typically based on deep learning architectures, such as neural networks, and are capable of performing a wide range of natural language processing tasks, such as text classification, language translation, and text generation.”

As ChatGPT already notes, a large language model “learns” by getting fed training data. This consists of Wikipedia articles, books, text from various parts of the web, etc.

It then uses that training data and probabilities to generate responses.
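
To make “training data and probabilities” a little more concrete, here is a toy sketch in Python. It is not how ChatGPT is built: real LLMs use neural networks trained on vastly more text. The tiny corpus and the function names (`next_word_probabilities`, `generate`) are made up for illustration. The sketch only shows the basic idea of counting what tends to come next and then sampling from those odds.

```python
import random
from collections import Counter, defaultdict

# Tiny stand-in for training data (an assumption for this sketch);
# a real LLM is trained on billions of tokens.
corpus = (
    "marketers write content . marketers write ads . "
    "marketers test ads . analysts test content ."
).split()

# Count how often each word follows each other word.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1


def next_word_probabilities(word):
    """Return {next_word: probability} estimated from the counts."""
    counts = following[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def generate(start, length=4, seed=0):
    """Build a phrase one word at a time, sampling by probability."""
    rng = random.Random(seed)
    words = [start]
    for _ in range(length):
        probs = next_word_probabilities(words[-1])
        if not probs:  # nothing ever followed this word in the corpus
            break
        choices, weights = zip(*probs.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)


print(next_word_probabilities("marketers"))
print(generate("marketers"))
```

In this corpus, “marketers” is followed by “write” twice and “test” once, so the model assigns them probabilities of about 0.67 and 0.33. The generated phrase can read fluently without the program knowing whether it is true, which is worth keeping in mind for the sections on accuracy.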

GPT-3, the predecessor of the model family behind ChatGPT, was trained on roughly 300 billion tokens (words and word fragments). That training data does not refresh itself automatically. Search engines like Google and Bing continuously crawl the web to refresh their indexes; GPT-3 has no equivalent process for its training data.

The cutoff for ChatGPT data is September 2021. So, if you ask a chatbot built on these models about events from 2022 or 2023, it will largely be unable to answer.

What Is the GPT in ChatGPT?

ChatGPT explains that GPT “refers to a type of large neural language model developed by OpenAI that uses the transformer architecture and is trained on massive amounts of text data using unsupervised learning … ChatGPT is an instance of the GPT architecture that has been fine-tuned specifically for conversational purposes.”

Generative pre-trained transformers (GPTs) are a family of language models trained on a large set of text data. The idea is for the system to use that data to write in natural language. Its responses can serve many purposes, and in marketing, there is a healthy set of potential use cases:

- Learning about a particular topic

- Getting a “copilot” to help generate HTML, JavaScript, or related code

- Writing regular expressions for tools like Google Analytics

- Using the tool as a proofreader or writing assistant

There are advantages and pitfalls that need to be explored in those areas. But before you weigh those for your business, you need to understand what tasks GPTs excel in and what tasks they generally fail at.

Is ChatGPT Accurate?

If you go by some of the headlines from media sites like CNN, ChatGPT and related technologies can seemingly do just about everything.

But anyone who has played around with this technology even a little understands that it’s not quite that simple.

And it’s not that simple largely because of 2 things: hallucinations and limited data sets.

What Are Hallucinations in GPTs?

ChatGPT talks about hallucinations in GPTs, saying they “refer to generated text that is not consistent with the input or the context of the task at hand … In some cases, hallucinations can be caused by the GPT’s ability to remember and reuse information from the training data, even if that information is not relevant to the current task.”

AIs like ChatGPT, Bing AI, and Google Bard can all give out confident answers about a person or topic that are completely wrong or “invented.” In the context of AI, these are often called hallucinations.

For instance, if you ask ChatGPT to talk about details of people who don’t qualify as celebrities or topics where its data set is lacking, it’s likely to generate a response that is invented, while not adding the caveat that it’s not confident about its answer.

It’ll do so without understanding whether what it is saying is completely accurate, fully invented, or outright wrong.

What Are the Implications of Limited Data Sets in GPTs?

Large language models can describe certain parts of the world, but you can quickly run into limitations:

ChatGPT answers the question “What year is it?” correctly. However, when asked who the Vice President of the US is in 2023, it responds with “I’m sorry, but as an artificial intelligence language model, I cannot predict the future, and my training only goes up until 2021. Therefore, I cannot provide information about who the Vice President of the United States may be in 2023 or any future year beyond my knowledge cutoff date.”

These limitations exist because ChatGPT gets its data sets in bulk, with no active crawls to keep them current.

This is important context when you’re using it to help seed ideas for content generation. It is critical to fact-check information that comes from a large language model, especially if it’s about a field that you are not an expert in.

ChatGPT and Online Marketing: Can ChatGPT Help Marketers Succeed?

If you understand the limitations of ChatGPT and where it’s likely to stumble, you can formulate use cases for it in areas where it’s likely to do well.

- You’re not likely to be able to use it for pure content generation minus a writer. It will hallucinate facts and damage your brand.

- If you expect it to do all the thinking for you when it comes to development, you will be sorely disappointed.

But it can impact those areas significantly in a blended human plus AI approach.

Can ChatGPT Shorten My Company’s Development Time?

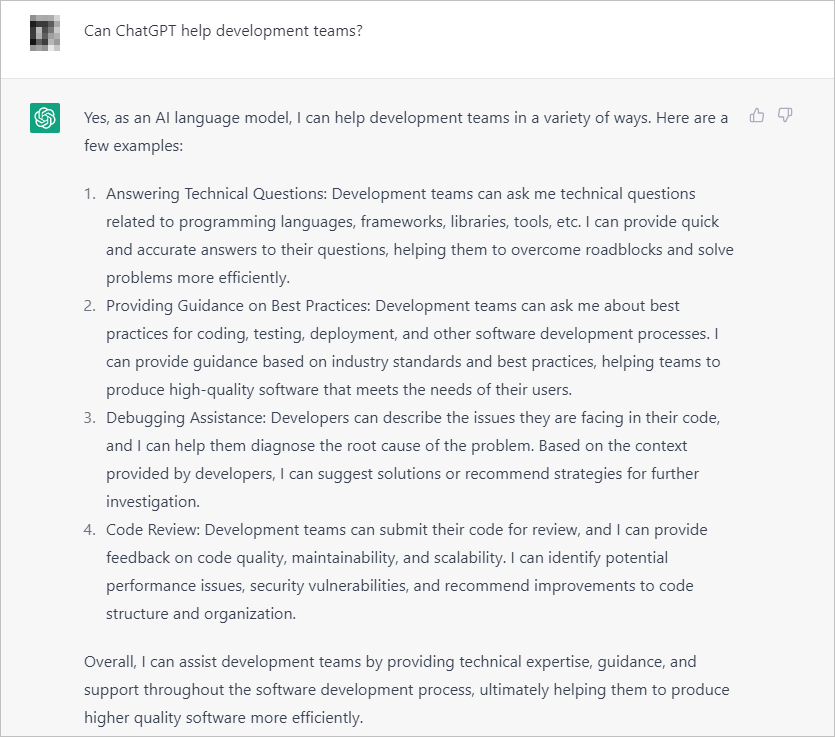

ChatGPT responds in the affirmative when asked if it can help development teams. It lists and describes different ways it can help: answering technical questions, providing guidance on best practices, debugging assistance, and code review.

ChatGPT can …

- produce snippets of code based on your prompt,

- provide generally good answers to technical questions, and

- help out with debugging.

As is always the case with LLMs, your team needs to check if the output works.
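
As a rough sketch of what “checking if the output works” can look like, the Python example below pairs a small helper with a few assertions a reviewer might run before trusting it. The helper `add_utm` is a hypothetical stand-in for the kind of snippet an assistant might produce when asked to add UTM campaign parameters to a URL. It is not actual ChatGPT output, and the URLs are placeholders.

```python
from urllib.parse import parse_qs, urlencode, urlparse


# Hypothetical stand-in for an AI-generated helper (not real ChatGPT output).
def add_utm(url, source, medium, campaign):
    """Append UTM campaign parameters to a URL."""
    params = urlencode({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
    })
    # Use "&" if the URL already has a query string, "?" otherwise.
    separator = "&" if "?" in url else "?"
    return f"{url}{separator}{params}"


def check_add_utm():
    """Checks a reviewer might run before shipping the helper."""
    tagged = add_utm("https://example.com/pricing", "newsletter", "email", "spring sale")
    query = parse_qs(urlparse(tagged).query)
    assert query["utm_source"] == ["newsletter"]
    assert query["utm_campaign"] == ["spring sale"]  # the space survives encoding

    # A URL that already has a query string must keep its parameters.
    tagged = add_utm("https://example.com/?ref=home", "ads", "cpc", "q2")
    query = parse_qs(urlparse(tagged).query)
    assert query["ref"] == ["home"]
    assert query["utm_medium"] == ["cpc"]
    return True


print(check_add_utm())  # prints True when every check passes
```

The checks deliberately include an edge case (a URL that already has a query string), because generated code often handles the obvious input and stumbles on the less obvious one.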

The other thing to note is that you can ask it to explain why it produced the code it did. And you can use more prompts to change the approach.

Depending on the maturity of your development team, this (or other tools like GitHub Copilot) may save you a ton of time per feature release. That should help your teams produce more work in the same amount of time.

Can ChatGPT Impact How I Use Tools like Google Analytics?

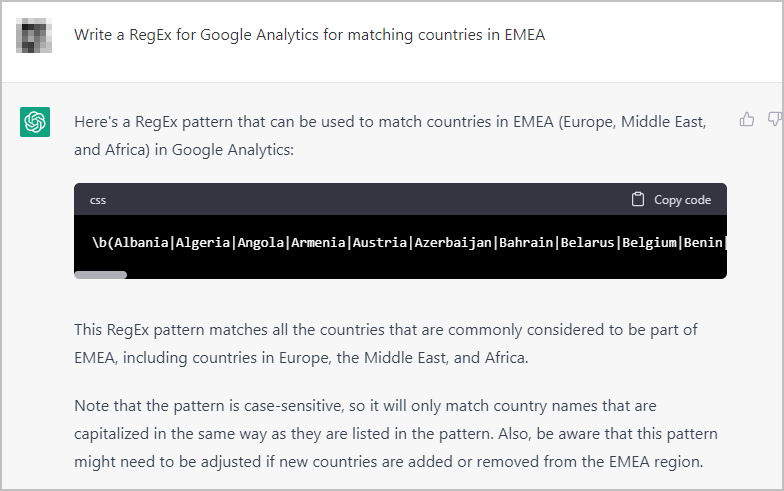

ChatGPT provides a RegEx pattern that can be used to match countries in Europe, the Middle East, and Africa when prompted to “Write a RegEx for Google Analytics for matching countries in EMEA”.

The proficiency of your marketing team can impact their ease or difficulty in using advanced features of tools like Google Analytics.

One feature that tools like ChatGPT may help with is Advanced Segments.

When filtering down to the data you need, you’re sometimes required to use regular expressions (RegEx). If you have a relatively novice team, they may find it difficult to write a RegEx that matches exactly what they need.

In this case, if you can describe the segment you want in natural language, the LLM may be able to help you write the RegEx you need.

This should help relegate the more tedious parts of web analytics to AI. That way, your analysts can focus on finding insights from data, instead.
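
Before pasting a generated RegEx into a segment, it helps to test it against values that should and should not match. The Python sketch below shows one way to do that. The pattern is a deliberately short, hypothetical example rather than the one ChatGPT produced, and the sample country names are assumptions. Python’s `re` module is also not the same engine your analytics tool uses, so treat a clean result as a sanity check rather than a guarantee.

```python
import re

# Hypothetical, deliberately short pattern; a real EMEA list is far longer.
EMEA_PATTERN = (
    r"^(Germany|France|United Kingdom|Spain|"
    r"United Arab Emirates|Saudi Arabia|South Africa|Nigeria)$"
)

# Sample values (assumptions) that the segment should and should not include.
should_match = ["Germany", "United Kingdom", "South Africa"]
should_not_match = ["United States", "South Korea", "Germany (unknown)"]


def check_pattern(pattern, positives, negatives):
    """Return a list of problems; an empty list means every check passed."""
    compiled = re.compile(pattern)
    problems = []
    for value in positives:
        if not compiled.search(value):
            problems.append(f"missed: {value}")
    for value in negatives:
        if compiled.search(value):
            problems.append(f"wrongly matched: {value}")
    return problems


print(check_pattern(EMEA_PATTERN, should_match, should_not_match))  # prints []
```

The `^` and `$` anchors matter here. Without them, a loose alternative such as `United` would also match “United States”, which is exactly the kind of quiet error that is easy to miss when the pattern comes from a chatbot.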

ChatGPT and Online Marketing: Can It Improve My Content Marketing Efforts?

Let’s say your company sells ergonomic equipment for the workplace, and you want some ideas for your blog.

You can ask tools like ChatGPT to generate ideas about viable topics:

ChatGPT lists potential topics with corresponding descriptions when prompted to “Come up with a few good topics for a blog about ergonomics in corporate environments, with short descriptions about what the blog entry can be about”.

From there, you can go to tools like Google Ads Keyword Planner to check if …

- any of the topics have valuable target terms

- the potential audience size is good enough for the investment in content creation

But ChatGPT doesn’t stop at idea generation.

It can be your copilot when writing about certain things. If you want a sentence or two rephrased, you can input specific parts of your draft and ask ChatGPT to come up with alternative versions:

ChatGPT paraphrases a statement when prompted to “Rework this sentence”.

You can also use ChatGPT to write long-form content with very targeted prompts. But the time spent editing the output may outweigh the time saved.

So yes, ChatGPT can help with content marketing. This comes with the typical caveat, however, that the AI chatbot can’t tell when it is inventing names of people who do not exist, accounts of things that didn’t happen, etc.

It’s best used in small doses, for idea generation and for reworking an angle, to help a capable writer generate content faster.

What about Google Bard, Bing AI, and Other LLMs?

Both Bing AI and Google Bard have many of the same pitfalls as ChatGPT, especially around hallucinations.

Bing AI tends to give shorter responses at base settings while citing references from the web.

Google Bard was released only recently, but companies looking to do things more efficiently should definitely test it.

Can LLM AI like ChatGPT Help Me Run an Online Marketing Function Better?

The short answer is yes, with caveats.

The longer answer is that while there are obvious benefits, you can shoot yourself in the foot if you don’t know what you’re doing. You should start with areas where tools like ChatGPT perform relatively well, with minimal risk of hallucinations:

- idea generation

- reworking sentences

- RegEx for tools like Google Analytics

- code assistance