Why CRO A/B Testing is Essential for Business Growth

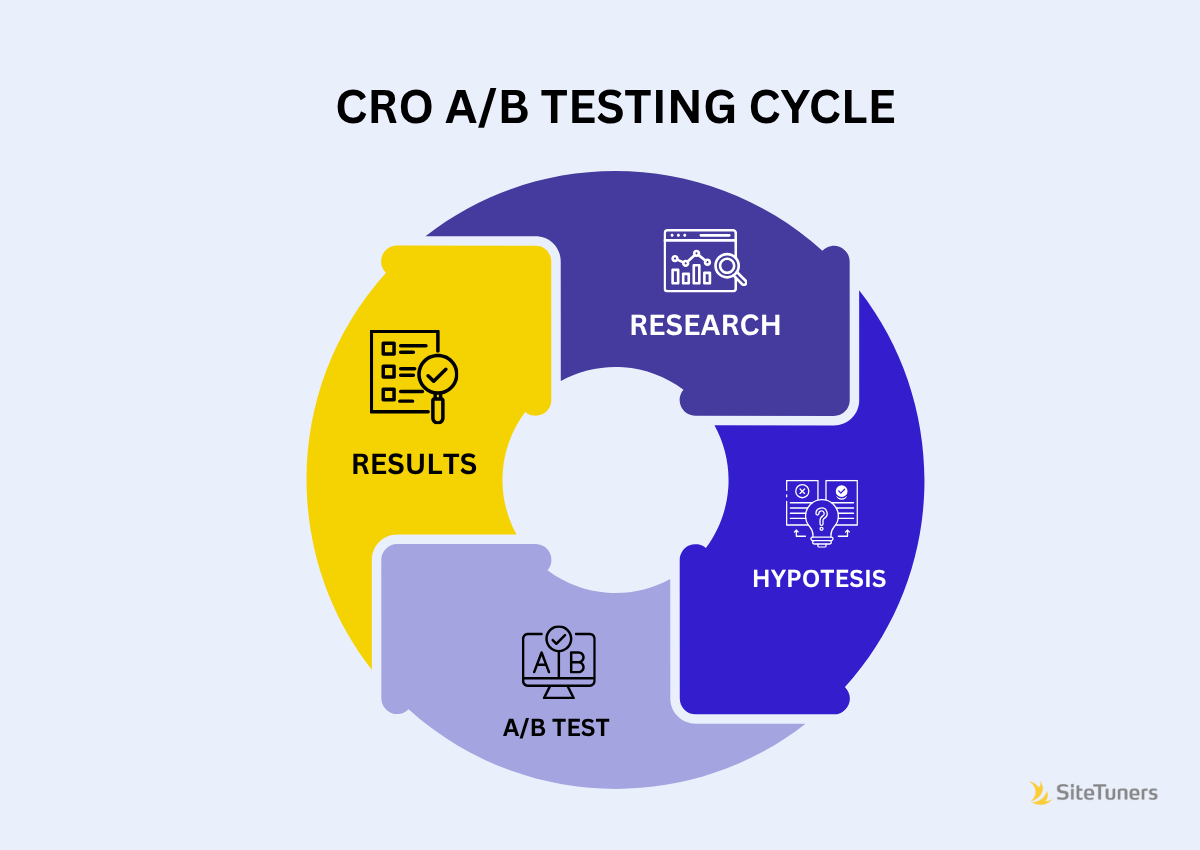

CRO A/B testing uses controlled experiments (A/B tests) to validate hypotheses within a broader Conversion Rate Optimization (CRO) strategy. It’s a powerful feedback loop where CRO identifies opportunities and A/B testing validates them with data.

- CRO: The strategic process of improving your website to increase desired user actions.

- A/B testing: A method used in CRO to compare two versions of an element and see which performs better.

- Key Insight: A/B testing is not CRO; it’s a critical tool within the CRO process.

If your traffic is growing but conversions are flat, guesswork isn’t the answer. Changing button colors or headlines without understanding why visitors aren’t converting is a shot in the dark. The reality is that only one in eight A/B tests yields significant results, often because teams lack a strategic framework and test random elements without proper research.

The solution is to treat CRO as the strategy and A/B testing as the validation. CRO provides the research and analysis to identify what to test and why, while A/B testing offers the scientific proof that a change improves performance.

This combination is transformative. Companies like Google, Amazon, and Netflix run thousands of experiments annually, building cultures where data replaces hunches.

I’m Jeff Loquist, Senior Director of Optimization at SiteTuners. With 18 years of industry experience, I’ve seen how systematic experimentation turns visitors into customers. This guide shares the proven framework we use at SiteTuners to build sustainable, data-driven growth engines.

CRO vs. A/B Testing: Understanding the Core Difference

People often use “CRO” and “A/B testing” interchangeably, but they are not the same. Confusing them is like thinking a hammer is the only tool you need when building a house; you need the hammer, but it’s just one tool in a much bigger project.

- CRO is your strategy. It’s the big-picture process of understanding why visitors aren’t converting. CRO uses psychology, data analysis, and UX design to answer the “what” and “why” of user behavior.

- A/B testing is your tactic. It’s the experiment you run within your CRO strategy to validate ideas. When CRO research identifies a problem and proposes a solution, A/B testing proves whether it works. It answers the “how”: how you know that a change actually improves performance.


For example, your CRO research might find a pricing page is confusing. You hypothesize that simplifying it from five tiers to three will increase sign-ups. CRO A/B testing is how you validate that hypothesis by showing both versions to users and letting data decide the winner. This creates a powerful feedback loop where research informs tests, and test results (win or lose) inform future strategy.

Common CRO Myths Debunked

Let’s clear up some common myths:

- “A/B testing is the same as CRO.” False. A/B testing is a tool used within the broader CRO process, which also includes research, analysis, and strategy.

- “CRO is just changing button colors.” While small tweaks can be part of it, mature CRO programs optimize entire user flows, address major friction points, and influence strategic decisions like pricing and site architecture.

- “A/B tests are too slow or risky.” Poorly designed tests can be, but properly executed tests provide the confidence to make changes quickly with data-backed decisions. You test changes on a portion of your traffic to measure impact before a full rollout, reducing risk.

To make this crystal clear, here’s how CRO and A/B testing compare:

| Feature | Conversion Rate Optimization (CRO) | A/B Testing |
|---|---|---|
| Scope | Broad, strategic, holistic process encompassing the entire user journey. | Narrow, tactical method for comparing specific variations of an element. |
| Goal | Improve the overall percentage of users completing desired actions; improve user experience. | Validate specific hypotheses by determining which version of an element performs better against a defined metric. |
| Approach | Multidisciplinary (analytics, UX, psychology, copywriting); research-driven; iterative. | Scientific experiment; controlled comparison; statistical analysis. |
| Output | Deeper understanding of user behavior; optimized user flows; increased conversion rates; business growth. | Data-driven decision on which variation to implement; insights into user preferences for a specific change; validation of hypotheses. |
| Role | The “What” and “Why” (identifying problems, forming strategy). | The “How” (validating solutions, measuring impact). |

Understanding this distinction is fundamental. When you treat CRO as the strategy and A/B testing as a validation tool, you stop guessing and start building a systematic approach to growth.

The 6-Step CRO A/B Testing Playbook: From Hypothesis to High Conversions

Effective CRO A/B testing follows a systematic process that transforms insights into measurable growth. This is the exact framework we’ve refined at SiteTuners over two decades. It’s a continuous cycle of learning that turns “I think this might work” into “I know this works.”


Step 1: Research and Identify Opportunities

Most optimization efforts fail because they skip this step. We start by gathering data to diagnose the problem before prescribing a solution.

- Quantitative Data (the “What”): We use tools like Google Analytics to find drop-off points in funnels, pages with high bounce rates, and other problem areas.

- Qualitative Data (the “Why”): We use heatmaps, session recordings, and user surveys to understand user behavior and uncover pain points that numbers alone can’t explain.

Combining these data sources reveals patterns and provides the foundation for strong hypotheses.

Step 2: Formulate a Strong Hypothesis

A hypothesis is a structured prediction, not a random guess. We use a simple framework: “If we [make this change], then [this outcome] will occur because [this reason].”

For example: “If we add trust badges above the payment form, then checkout completion will increase because it will reassure users concerned about security.” This specificity is crucial. We then prioritize test ideas using frameworks like PIE (Potential, Importance, Ease) to focus on high-impact experiments.
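To make the prioritization step concrete, here is a minimal Python sketch of PIE scoring. It assumes each factor is rated from 1 to 10 and that the score is the plain average of the three ratings, which is a common convention but not the only one. The `Idea` class, the `prioritize` helper, and the ratings are all hypothetical and chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    """A candidate experiment with PIE ratings (assumed scale: 1-10)."""
    name: str
    potential: int   # how much room for improvement the page has
    importance: int  # how valuable the traffic to the page is
    ease: int        # how easy the test is to build and launch

    @property
    def pie_score(self) -> float:
        # Assumption: PIE score is the simple average of the three ratings.
        return (self.potential + self.importance + self.ease) / 3


def prioritize(ideas):
    """Return ideas sorted from highest to lowest PIE score."""
    return sorted(ideas, key=lambda idea: idea.pie_score, reverse=True)


# Hypothetical backlog; the ratings are illustrative, not real data.
backlog = [
    Idea("Simplify pricing page to three tiers", potential=9, importance=8, ease=4),
    Idea("Trust badges above payment form", potential=8, importance=9, ease=7),
    Idea("Rewrite homepage headline", potential=6, importance=7, ease=9),
]

for idea in prioritize(backlog):
    print(f"{idea.pie_score:.1f}  {idea.name}")
```

With these made-up ratings, the trust badge test ranks first. The pricing page idea has the highest potential but drops to the bottom because it is hard to build.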

Step 3: Create Variations and Determine Sample Size

Here, we create the test variations. The control (Version A) is the current page, and the variation (Version B) includes the proposed change. A critical principle is to isolate one variable at a time to know what caused the change in performance.

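Next, we calculate the required sample size before launch, using tools like Optimizely’s sample size calculator. The inputs are the baseline conversion rate, the smallest lift worth detecting, and the desired significance and power levels. This ensures the test collects enough data to reach statistical significance, so decisions are not based on statistical noise.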
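For readers who want to see the arithmetic behind a sample size calculator, the sketch below uses the standard textbook approximation for comparing two proportions with a two-sided test. It is an illustration under those assumptions, not the method any particular vendor uses, so commercial calculators may report different numbers. The function name and defaults are our own.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variation for a two-sided test.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%
    relative_lift: smallest relative improvement worth detecting, e.g. 0.20
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical scenario: 5% baseline, aiming to detect a 20% relative lift.
print(sample_size_per_variation(0.05, 0.20))
```

In this hypothetical scenario the answer is a little over 8,000 visitors per variation. Halving the detectable lift roughly quadruples the requirement, which is why small expected improvements call for much longer tests.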

Step 4: Run the Experiment

We use specialized CRO A/B testing tools to randomly split traffic between versions and track metrics. Randomization is crucial to eliminate bias.
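One common way to split traffic is to hash a visitor ID so that each visitor lands in a stable, effectively random bucket. The sketch below shows the idea in Python. It is a simplified illustration, not a description of how any specific testing tool works, and the `assign_variation` function and experiment name are hypothetical.

```python
import hashlib


def assign_variation(user_id: str, experiment: str, variations=("A", "B")) -> str:
    """Deterministically map a visitor to a variation.

    Hashing the experiment name together with the user ID means the same
    visitor always sees the same version within an experiment, while
    different experiments get independent splits.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variations)
    return variations[bucket]


# Hypothetical usage: the experiment name and user IDs are made up.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variation(f"user-{i}", "checkout-trust-badges")] += 1
print(counts)
```

Because the assignment depends only on the ID and the experiment name, a returning visitor does not flip between versions, which keeps the measured behavior clean.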

We typically run tests for at least two full business weeks to account for variations in user behavior by day of the week. Low-traffic sites may require longer tests. It’s also vital to avoid external factors like holidays or major ad campaigns that could skew results. Resist peeking at results and stopping the moment one version pulls ahead; ending a test at the first significant-looking reading inflates the risk of a false positive.

Step 5: Analyze the Results

Once the test is complete, we analyze whether the difference in performance is statistically significant, meaning it is unlikely to be the result of random chance alone. We typically look for a 95% confidence level (a p-value below 0.05). Tools like VWO’s statistical significance calculator can help with this analysis.
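As a rough illustration of what a significance calculator does, here is a two-proportion z-test in Python using only the standard library. This is one common frequentist approach; the calculators mentioned above may use different methods. The function name and the visitor counts are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: control converts 400 of 8,000, variation 480 of 8,000.
z, p = two_proportion_z_test(400, 8000, 480, 8000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```

With these made-up numbers the p-value falls well below 0.05, so the lift from 5% to 6% would count as statistically significant at the 95% threshold.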

There are no failed tests in CRO. A losing test still provides valuable insights into user behavior. This cumulative knowledge is what makes an optimization program so powerful.

Step 6: Implement Winners and Leverage Insights

When a variation wins with statistical significance, we deploy it to 100% of traffic and continue to monitor its performance. The most crucial part of this step is documenting everything. We record what we learned from both winning and losing tests to create a shared knowledge base.

This documentation prevents repeating mistakes and allows insights to be shared across the organization. This iterative process of testing, learning, and improving drives continuous, sustainable growth.

Advanced Techniques and Best Practices

As your CRO A/B testing program matures, you can move from basic experiments to advanced techniques that open up deeper insights.


Types of Testing: Beyond a Simple A/B

While standard A/B testing is the foundation, other methods can be more effective in certain situations.

- A/B/n Testing: Tests multiple variations (B, C, D, etc.) against the control (A) at once. It’s efficient for high-traffic sites with several distinct ideas for one element.

- Multivariate Testing (MVT): Tests multiple elements simultaneously (e.g., headline and CTA) to see how they interact. Because traffic is divided across every combination of elements, MVT requires very high traffic to be effective.

- Split URL Testing: Compares two completely different page designs hosted on separate URLs. It’s ideal for major redesigns.

- Multi-armed Bandit Testing: Uses machine learning to dynamically shift traffic to better-performing variations during the test, maximizing conversions in real-time.
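To show how a multi-armed bandit shifts traffic during a test, here is a small simulation using Thompson sampling, one widely used bandit algorithm. Commercial tools may use other algorithms, so treat this as a sketch. The conversion rates are invented for the simulation; in a real test they are unknown.

```python
import random


def thompson_sampling(true_rates, visitors, seed=0):
    """Simulate a bandit test and return the traffic each variation received.

    true_rates: hypothetical conversion rate of each variation (simulation only)
    """
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(visitors):
        # Draw a plausible conversion rate for each variation from its
        # Beta posterior, then show the variation with the highest draw.
        draws = [
            rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)
        ]
        arm = draws.index(max(draws))
        # Simulate whether this visitor converts.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]


# Hypothetical variations converting at 5% and 20%.
traffic = thompson_sampling([0.05, 0.20], visitors=5000)
print(traffic)
```

As evidence accumulates, most visitors are routed to the stronger variation. The trade-off is that the weaker variation collects less data, so estimates of its true performance are less precise than in a fixed 50/50 split.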

Best Practices for Designing Effective Experiments

Proper test design is critical for reliable results.

- Test Duration: Run tests long enough to achieve statistical significance, typically at least two weeks. Ending tests early is a common and costly mistake.

- Isolate Variables: In A/B tests, change only one element at a time to know what caused the result. Use MVT for testing multiple elements if you have enough traffic.

- Validate Your Setup: Run an A/A test (showing two identical versions) to confirm your tools are working correctly without bias.

- Consider Timing: Avoid running tests during holidays or promotions that alter normal user behavior.

- What to Test: Focus on high-impact elements like headlines, calls-to-action (CTAs), forms, page layout, and social proof (testimonials, reviews, trust badges).

The Role of Data and Statistics in CRO A/B Testing

Statistical rigor separates scientific optimization from guesswork. Beyond statistical significance (typically 95% confidence), consider statistical power (usually 80%), which is the probability of detecting a real effect when one exists. There are different statistical approaches (Bayesian and Frequentist), but the goal is the same: avoid confirmation bias and let data, not hope, guide decisions. Only about one in eight A/B tests yields a significant win, but every test provides valuable learnings. Use tools like VWO’s statistical significance calculator to ensure your analysis is sound.
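To illustrate the Bayesian side of that distinction, the sketch below estimates the probability that the variation truly beats the control. It assumes uniform Beta(1, 1) priors and uses Monte Carlo sampling; both are simplifying choices of ours, and Bayesian testing tools may model this differently. The function name and counts are hypothetical.

```python
import random


def probability_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Estimate P(rate_B > rate_A) from Beta posteriors (uniform priors assumed)."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Sample a plausible true conversion rate for each version.
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


# Hypothetical results: control converts 400 of 8,000, variation 480 of 8,000.
print(probability_b_beats_a(400, 8000, 480, 8000))
```

Where a frequentist test reports a p-value, this approach reports a direct statement such as “there is a very high probability that B is better than A,” which many stakeholders find easier to act on.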

Applying A/B Testing in a Mobile App Context

CRO A/B testing is just as valuable for mobile apps, with some unique considerations.

- Testing Types: Use server-side testing for core functionality changes and client-side testing for UI/UX tweaks.

- Mobile UI/UX: Pay close attention to touch targets (e.g., button sizes of at least 44×44 points per Apple’s Human Interface Guidelines), font legibility, and simplified navigation.

- Platform-Specifics: Adhere to platform design guidelines (Material Design for Android, HIG for iOS) and test across a wide range of devices.

Building a Successful CRO Program

A successful CRO A/B testing program is about more than tools; it’s about people, process, and culture. Success comes from assembling the right team and fostering an environment where data-driven experimentation thrives.


The Dream Team: Essential Skills and Roles

A great CRO program requires a team with diverse skills working in harmony.

- CRO Strategist/Manager: Sets the overall direction and prioritizes tests.

- Data Analyst: Digs into data and calculates statistical significance to provide insights.

- UX/UI Designer: Creates user-friendly variations that bring hypotheses to life.

- Copywriter: Crafts persuasive messages that guide users to action.

- Developer: Implements test variations precisely and without bugs.

- Project Manager: Coordinates the team and keeps the testing pipeline moving.

Not every business can build this team in-house. If you face budget or hiring constraints, working with a conversion rate optimization agency can provide the expertise you need without the overhead.

Fostering a Data-Driven Experimentation Culture

The real change occurs when CRO A/B testing becomes part of your company’s DNA, as seen at companies like Google and Netflix that run thousands of experiments annually.

Building this culture involves several key shifts:

- Get Leadership Buy-In: When leaders champion data-driven decisions, the entire organization follows.

- Celebrate Learnings, Not Just Wins: Since only about one in eight tests produces a significant win, it’s crucial to value the insights gained from every experiment, win or lose.

- Create a Knowledge Base: Document every test, result, and learning to build institutional memory and avoid repeating mistakes.

- Empower Teams to Test: Give teams the autonomy to run experiments within a structured framework to foster curiosity and ownership.

The goal is to move from opinions to data. When “Let’s test it” becomes the default response to a new idea, you’ve built a culture of experimentation that drives sustainable growth.

Frequently Asked Questions about CRO and A/B Testing

Here are answers to the most common questions we hear about CRO A/B testing.

What is the fundamental difference between CRO and A/B testing?

CRO is the overall strategy for improving conversions. It involves research, analysis, and forming hypotheses to understand the “what” and “why” of user behavior. A/B testing is a tactic within that strategy – a controlled experiment to validate a hypothesis and measure the impact of a specific change. In short, CRO is the plan, and A/B testing is the proof.

How long should an A/B test run?

A test should run long enough to reach statistical significance and cover full business cycles, which typically means at least two weeks. The exact duration depends on your site’s traffic and conversion rate; lower-traffic sites will need longer tests. The biggest mistake is ending a test too early, which leads to unreliable conclusions.
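As a back-of-the-envelope illustration, the Python sketch below turns a required sample size and daily traffic into a test duration. It assumes traffic is split evenly, applies a two-week minimum, and rounds up to whole weeks so every weekday is covered equally. The function name and all numbers are hypothetical.

```python
import math


def estimated_test_days(sample_per_variation, variations, daily_visitors, min_days=14):
    """Estimate how many days a test should run.

    sample_per_variation: required visitors per variation (from a calculator)
    variations: number of versions in the test, including the control
    daily_visitors: visitors entering the test per day
    """
    total_needed = sample_per_variation * variations
    days_for_sample = math.ceil(total_needed / daily_visitors)
    days = max(days_for_sample, min_days)
    # Round up to whole weeks so each day of the week is represented equally.
    return math.ceil(days / 7) * 7


# Hypothetical site: 8,158 visitors needed per variation, 1,000 visitors a day.
print(estimated_test_days(8158, variations=2, daily_visitors=1000))  # 21
```

Here the sample requirement alone would take 17 days, which rounds up to three full weeks. A high-traffic site that could hit its sample in a day or two would still run for the two-week minimum.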

What tools are essential for a CRO A/B testing program?

A complete CRO A/B testing toolkit includes three types of tools:

- Quantitative Analytics Platforms: Tools like Google Analytics to understand the “what” (e.g., where users drop off).

- Qualitative User Behavior Tools: Tools like Hotjar for heatmaps and session recordings to understand the “why” behind user actions.

- A/B Testing Platforms: Dedicated software to run experiments, split traffic, and analyze results.

You’ll also need calculators for sample size and statistical significance, such as those from Optimizely and VWO. If managing this toolkit seems daunting, our conversion rate optimization services can provide the foundation you need.

Conclusion: Transform Your Website into a Conversion Machine

The key takeaway is this: CRO A/B testing is the engine for sustainable online growth. It combines the strategic direction of Conversion Rate Optimization with the scientific validation of A/B testing. This approach moves you from guesswork to data-driven decisions.

By following a systematic process – research, hypothesize, test, analyze, and iterate – your website becomes a dynamic conversion machine that continuously learns and improves. Every test, win or lose, builds your understanding of what truly resonates with your audience.

At SiteTuners, we’ve perfected this methodology since 2002, helping over 2,100 clients turn their websites into revenue generators by replacing internal opinions with data-backed insights. The question isn’t if this process works, but if you’re ready to implement it.

Ready to take the guesswork out of your marketing and make decisions that drive real results? Explore our conversion rate optimization services and find your website’s untapped potential.